Understanding Dynamic Programming – A Complete Guide

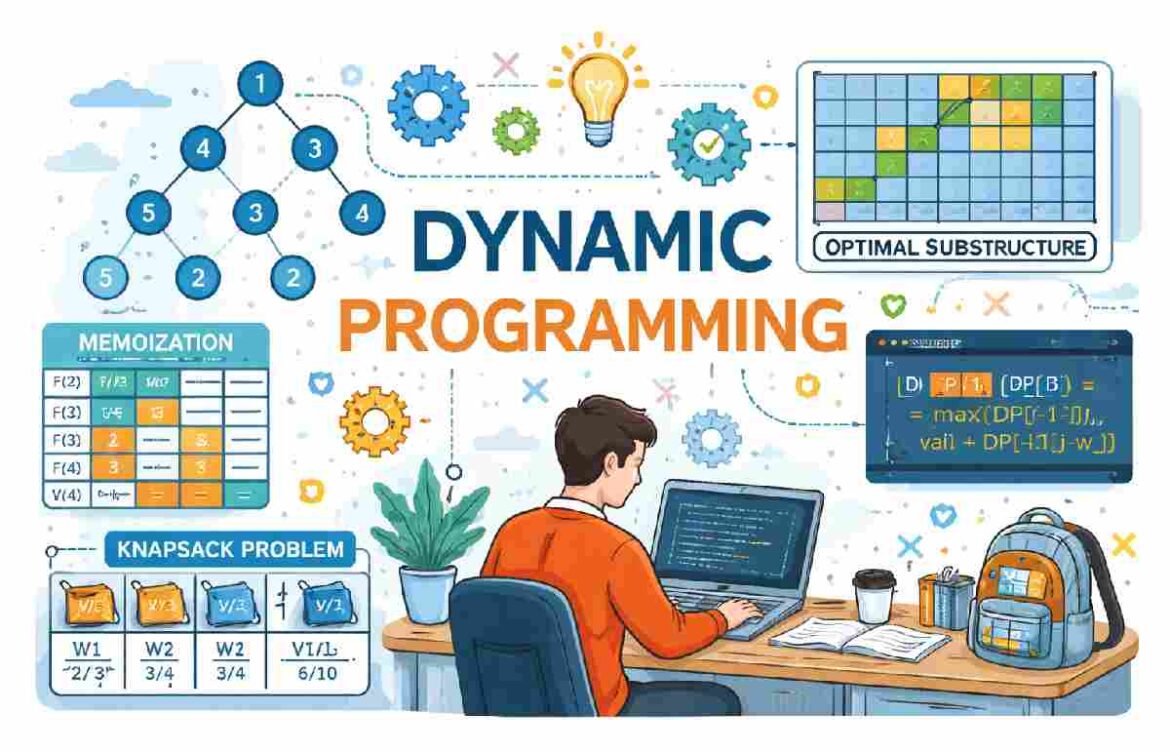

Dynamic Programming is an algorithmic technique that is mainly an optimization over plain recursion. Wherever a recursive solution makes repeated calls for the same inputs, we can optimize it using Dynamic Programming.

The idea is to store the results of subproblems so that we do not have to recompute them when they are needed later. This simple optimization typically reduces time complexity from exponential to polynomial.

Some well-known problems solved using Dynamic Programming are Fibonacci Numbers, Diff Utility (Longest Common Subsequence), Bellman-Ford Shortest Path, Floyd-Warshall, Edit Distance, and Matrix Chain Multiplication.

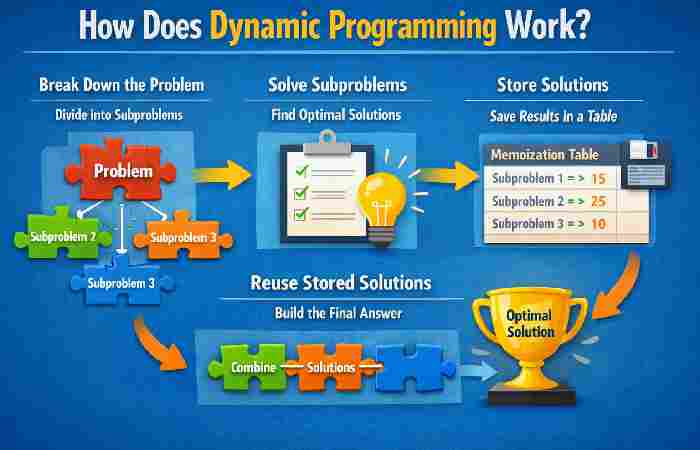

How Does Dynamic Programming Work?

Dynamic programming works by breaking complex problems down into simpler subproblems and then finding optimal answers to those subproblems. Memoization is a technique that saves the results of these subproblems so that they do not need to be recomputed when they are needed later. Saving solutions saves time whenever a subproblem that has already been encountered comes up again.

Dynamic programming can be achieved using two approaches:

Top-down approach

In the top-down approach, a problem is solved by recursively formulating its solution in terms of the answers to its subproblems. If the subproblems overlap, their answers can be memoized, that is, kept in a table for later use. The top-down approach therefore follows the strategy of memoization, which amounts to combining recursion with caching: recursion means the function calls itself on smaller inputs, whereas caching means preserving the intermediate results so that each one is computed only once.
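As a minimal sketch of the top-down idea, the example below memoizes a recursive solution to the climbing-stairs problem (counting the ways to climb `n` steps taking 1 or 2 steps at a time). The function name `climb_stairs` is chosen for illustration; the caching is done by the standard library's `functools.lru_cache`.

```python
from functools import lru_cache


@lru_cache(maxsize=None)  # remember the result for every distinct n seen so far
def climb_stairs(n: int) -> int:
    """Count the ways to climb n steps taking 1 or 2 steps at a time."""
    if n <= 1:
        return 1  # one way to stand still, one way to take a single step
    # Recursion: the last move was either a 1-step or a 2-step.
    # Caching: each smaller n is computed once and then looked up.
    return climb_stairs(n - 1) + climb_stairs(n - 2)


print(climb_stairs(5))  # 8
```

The recursive structure stays exactly as it would be without DP; only the decorator adds the cache.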

The top-down strategy has many benefits, including the following:

The top-down approach is easy to understand and implement. Problems are broken down into smaller parts, which helps users identify what needs to be done. With each step, larger, more complex problems become smaller, less complicated, and therefore easier to solve. Some parts may even be reusable within the same problem.

It allows subproblems to be solved on request. Problems are broken down into smaller parts whose solutions are stored for reuse, so only the parts that are actually needed are computed, and their solutions can be looked up later.

It is also easier to debug. Segmenting problems into small parts allows users to follow the solution quickly and determine where an error might have occurred.

Disadvantages of the top-down approach include:

The top-down approach uses recursion, which occupies more memory in the call stack and can reduce overall performance. Additionally, when the recursion is too deep, a stack overflow occurs.
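The depth problem can be observed directly. The sketch below assumes CPython, where overly deep recursion raises `RecursionError` once the interpreter's recursion limit is reached rather than crashing the process. The helper names `fib_memo` and `overflows_stack` are hypothetical.

```python
import sys


def fib_memo(n, memo=None):
    """Memoized (top-down) Fibonacci; the recursion depth grows with n."""
    if memo is None:
        memo = {}
    if n <= 1:
        return n
    if n not in memo:
        memo[n] = fib_memo(n - 1, memo) + fib_memo(n - 2, memo)
    return memo[n]


def overflows_stack(n):
    """Return True if fib_memo(n) exceeds the interpreter's recursion limit."""
    try:
        fib_memo(n)
    except RecursionError:
        return True
    return False


# A shallow call is fine; a call far deeper than the limit is not.
print(overflows_stack(50))
print(overflows_stack(10 * sys.getrecursionlimit()))
```

Memoization removes the repeated work but not the recursion depth, which is why very large inputs are usually handled with the bottom-up approach.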

Bottom-up approach

In the bottom-up method, once the solution to a problem has been written recursively in terms of its subproblems, users can reformulate it by solving the smaller subproblems first and then using their solutions to solve the larger subproblems.

Unlike the top-down approach, the bottom-up approach removes the recursion. Thus, there is neither stack overflow nor overhead from recursive function calls. It also saves the memory that the call stack would otherwise use. As with memoization, each subproblem is computed only once, so the time that plain recursion wastes recalculating the same values is eliminated.

The advantages of the bottom-up approach include the following:

It solves the small, reusable subproblems first and then decides how they will be put together to solve the larger problem.

It removes recursion, thus promoting the efficient use of memory space. Avoiding function-call overhead can also reduce running time in practice, although the asymptotic time complexity is typically the same as with memoization.
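A minimal sketch of the bottom-up approach, using Fibonacci numbers as the running example: the table is filled from the smallest subproblem upward with a plain loop, so no recursion is involved. The name `fib_bottom_up` is illustrative.

```python
def fib_bottom_up(n: int) -> int:
    """Compute the n-th Fibonacci number by filling a table iteratively."""
    if n <= 1:
        return n
    table = [0] * (n + 1)  # table[i] will hold the i-th Fibonacci number
    table[1] = 1
    for i in range(2, n + 1):
        # Both smaller subproblems are already solved when we reach i.
        table[i] = table[i - 1] + table[i - 2]
    return table[n]


print(fib_bottom_up(10))  # 55
```

Because the loop never nests function calls, large inputs such as `fib_bottom_up(5000)` do not run into a recursion limit.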

Dynamic Programming (DP) is one of those concepts in computer science that seems intimidating at first, but once you understand it, it changes the way you approach problem-solving. DP is a skill you need whether you are preparing for coding interviews, sharpening your algorithmic thinking, or building efficient systems.

Let's break it down in plain, human terms, without any unnecessary complexity.

Dynamic Programming comes to the rescue by:

- Avoiding repeated work

- Improving performance

- Reducing exponential time complexity to polynomial time

Key Properties of Dynamic Programming

For DP to apply, a problem must have two key properties:

- Overlapping Subproblems

The problem can be divided into smaller subproblems whose solutions are needed several times.

- Optimal Substructure

An optimal solution to the problem can be constructed from optimal solutions to its subproblems.
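To make optimal substructure concrete, here is a small sketch using the minimum path sum problem on a grid, moving only right or down. The cheapest way to reach a cell must pass through the cheaper of its two predecessors, so the optimal answer for a cell is built from the optimal answers for smaller subproblems. The function name and the sample grid are illustrative assumptions.

```python
def min_path_sum(grid):
    """Cheapest top-left to bottom-right path, moving only right or down."""
    rows, cols = len(grid), len(grid[0])
    best = [[0] * cols for _ in range(rows)]  # best[r][c] = cheapest cost to reach (r, c)
    for r in range(rows):
        for c in range(cols):
            if r == 0 and c == 0:
                prev = 0                      # starting cell has no predecessor
            elif r == 0:
                prev = best[r][c - 1]         # first row: can only come from the left
            elif c == 0:
                prev = best[r - 1][c]         # first column: can only come from above
            else:
                prev = min(best[r - 1][c], best[r][c - 1])
            best[r][c] = grid[r][c] + prev    # optimal substructure in one line
    return best[-1][-1]


print(min_path_sum([[1, 3, 1], [1, 5, 1], [4, 2, 1]]))  # 7
```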

Recursion vs Dynamic Programming

| Feature | Recursion | Dynamic Programming |
| --- | --- | --- |
| Computation | Repeats calculations | Stores results (memoization) |
| Time Complexity | Often exponential | Reduced to polynomial |
| Memory Usage | Low | Higher (due to storage) |
| Efficiency | Slower | Faster |
| Example | Fibonacci (naive) | Fibonacci (optimized DP) |

Fibonacci Example (Classic DP Problem)

Without Dynamic Programming (Recursive)

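    def fib(n):
        # Base cases: fib(0) = 0 and fib(1) = 1
        if n <= 1:
            return n
        # Each call spawns two more calls, so the work grows exponentially
        return fib(n - 1) + fib(n - 2)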

Problem: Recalculates the same values many times.
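To see how much work is repeated, the sketch below instruments the naive recursive function with a call counter. The `calls` counter and the name `fib_naive` are additions for illustration only.

```python
from collections import Counter

calls = Counter()  # calls[k] = how many times fib_naive(k) was invoked


def fib_naive(n):
    calls[n] += 1
    if n <= 1:
        return n
    return fib_naive(n - 1) + fib_naive(n - 2)


result = fib_naive(10)
print(result)               # 55
print(sum(calls.values()))  # 177 calls in total
print(calls[2])             # fib_naive(2) alone was computed 34 times
```

Only 11 distinct inputs (0 through 10) exist, yet the function is called 177 times. That gap is exactly the work that storing results avoids.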

With Dynamic Programming (Memoization)

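    def fib(n, dp=None):
        # Use a fresh cache per top-level call; a mutable default ({}) would be shared across calls
        if dp is None:
            dp = {}
        if n in dp:
            return dp[n]
        if n <= 1:
            return n
        dp[n] = fib(n - 1, dp) + fib(n - 2, dp)
        return dp[n]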

Now each value is calculated once and reused.

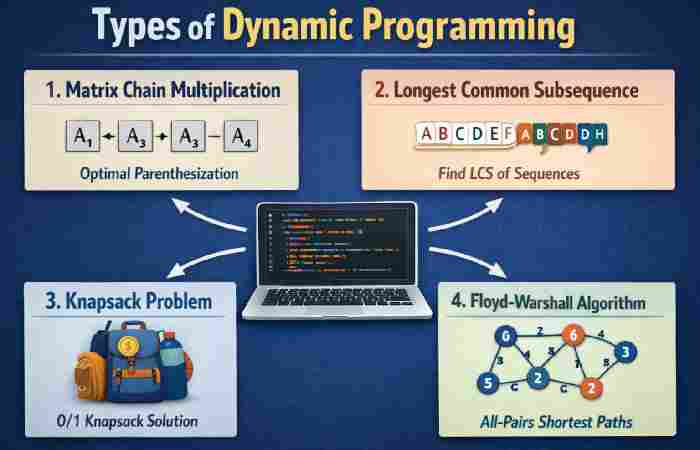

Types of Dynamic Programming

Dynamic Programming can be implemented in two main ways:

- Memoization (Top-Down Approach)
  - Uses recursion
  - Stores results in a cache (dictionary or array)
  - Easy to implement

- Tabulation (Bottom-Up Approach)
  - Uses iteration
  - Builds a table from smallest to largest subproblems
  - Often more efficient in practice

Memoization vs Tabulation

| Aspect | Memoization (Top-Down) | Tabulation (Bottom-Up) |
| --- | --- | --- |
| Approach | Recursive | Iterative |
| Speed | Slightly slower | Faster |
| Stack Usage | Uses recursion stack | No recursion stack |
| Implementation | Easier | Slightly more complex |
| Use Case | When recursion is intuitive | When iteration is simpler |

Steps to Solve a DP Problem

Here’s a structured approach you can follow:

- Identify if it’s a DP Problem. Ask:
  - Are there overlapping subproblems?
  - Does it have optimal substructure?

- Define the State: What variables represent your problem?

- Formulate the Recurrence Relation: How does the current state depend on previous states?

- Implement (Memoization or Tabulation)

- Optimize (Optional): Reduce space complexity if possible.
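As one possible walk-through of these steps, the sketch below applies them to the Longest Increasing Subsequence problem. The state and recurrence are spelled out in the comments; the function name is illustrative, and this is the straightforward O(n²) tabulation rather than the fastest known method.

```python
def longest_increasing_subsequence(nums):
    """Length of the longest strictly increasing subsequence of nums."""
    if not nums:
        return 0  # base case: an empty list has no subsequence
    # State: lis[i] = length of the longest increasing subsequence ending at nums[i].
    lis = [1] * len(nums)
    for i in range(1, len(nums)):
        for j in range(i):
            # Recurrence: extend any subsequence that ends in a smaller value.
            if nums[j] < nums[i]:
                lis[i] = max(lis[i], lis[j] + 1)
    # The best subsequence may end at any index.
    return max(lis)


print(longest_increasing_subsequence([10, 9, 2, 5, 3, 7, 101, 18]))  # 4
```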

Popular Dynamic Programming Problems

Here are some classic DP problems you’ll encounter:

| Problem Name | Description |

| Fibonacci Sequence | Basic introduction to DP |

| Knapsack Problem | Optimize value with weight constraints |

| Longest Common Subsequence | Compare two sequences |

| Longest Increasing Subsequence | Find ordered subsequence |

| Coin Change Problem | Minimum coins to make a value |

| Matrix Chain Multiplication | Optimal way to multiply matrices |

0/1 Knapsack Problem (Example)

Problem:

You are given the weights and values of a set of items. Maximize the total value without exceeding the weight capacity; each item can be taken at most once.

DP Table Representation:

| Item \ Capacity | 0 | 1 | 2 | 3 | 4 |
| --- | --- | --- | --- | --- | --- |
| Item 1 | 0 | 1 | 1 | 1 | 1 |
| Item 2 | 0 | 1 | 2 | 3 | 3 |
| Item 3 | 0 | 1 | 2 | 3 | 4 |

Each cell represents the best value achievable using the items up to that row within that column's capacity.
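The table can be reproduced with a short tabulation. The text does not list the items themselves, so the `(weight, value)` pairs below, `(1, 1)`, `(2, 2)`, and `(3, 3)`, are an assumption chosen because they generate exactly the rows shown above. The function name `knapsack_table` is also illustrative.

```python
def knapsack_table(items, capacity):
    """Return one DP row per item; items is a list of (weight, value) pairs."""
    rows = []
    prev = [0] * (capacity + 1)  # with no items, every capacity yields value 0
    for weight, value in items:
        row = prev[:]  # default choice: skip the current item
        for cap in range(weight, capacity + 1):
            # Either skip the item, or take it and fill the remaining capacity optimally.
            row[cap] = max(prev[cap], value + prev[cap - weight])
        rows.append(row)
        prev = row
    return rows


# Assumed items (weight, value); not given in the text.
items = [(1, 1), (2, 2), (3, 3)]
for row in knapsack_table(items, 4):
    print(row)
# [0, 1, 1, 1, 1]
# [0, 1, 2, 3, 3]
# [0, 1, 2, 3, 4]
```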

Coin Change Problem

Goal:

Find the minimum number of coins needed to make a target value.

Example:

| Amount | Minimum Coins |
| --- | --- |
| 0 | 0 |
| 1 | 1 |
| 2 | 1 |
| 3 | 2 |
| 4 | 2 |
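A minimal bottom-up sketch for this problem follows. The coin denominations are not stated in the text; the values `[1, 2]` used here are an assumption that happens to reproduce the table above. The function name `min_coins` is illustrative.

```python
def min_coins(coins, amount):
    """Return a list dp where dp[v] is the fewest coins needed to make value v."""
    INF = float("inf")  # marks values that cannot be made at all
    dp = [0] + [INF] * amount  # zero coins are needed to make 0
    for value in range(1, amount + 1):
        for coin in coins:
            # Using this coin last leaves the smaller subproblem value - coin.
            if coin <= value and dp[value - coin] + 1 < dp[value]:
                dp[value] = dp[value - coin] + 1
    return dp


# Assumed denominations; not given in the text.
print(min_coins([1, 2], 4))  # [0, 1, 1, 2, 2]
```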

Real-Life Applications of Dynamic Programming

Dynamic Programming is not just for exams; it is used in many real-world systems:

Navigation Systems

- Finding shortest paths (like Google Maps)

Finance

- Portfolio optimization

Bioinformatics

- DNA sequence alignment

Gaming

- AI decision-making

Text Processing

- Spell check and auto-correct
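As an illustration of the text-processing case, one common building block for spelling suggestions is the edit distance between two words (Edit Distance is also on the list of classic DP problems earlier in this guide). The sketch below is a standard Levenshtein distance with unit costs for insert, delete, and substitute; it is not a claim about how any particular spell checker is implemented.

```python
def edit_distance(a: str, b: str) -> int:
    """Minimum number of single-character edits needed to turn a into b."""
    # prev[j] = distance between the processed prefix of a and b[:j]
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]  # turning a[:i] into the empty string takes i deletions
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(
                prev[j] + 1,         # delete ca
                curr[j - 1] + 1,     # insert cb
                prev[j - 1] + cost,  # substitute, or keep if the characters match
            ))
        prev = curr
    return prev[-1]


print(edit_distance("kitten", "sitting"))  # 3
```

A suggestion list could then be ranked by this distance, with smaller values indicating closer matches.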

Time Complexity Improvements

| Approach | Time Complexity (Fibonacci) |
| --- | --- |
| Naive Recursion | O(2ⁿ) |
| Memoization | O(n) |
| Tabulation | O(n) |

Space Optimization in DP

Sometimes, you don’t need the full table.

Example:

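Instead of storing the entire Fibonacci array, keep only the last two values:

    def fib(n):
        a, b = 0, 1  # a = fib(0), b = fib(1)
        for _ in range(n):
            a, b = b, a + b  # slide the two-value window forward
        return a  # after n steps, a holds fib(n)

Space is reduced from O(n) to O(1).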
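The same idea carries over to two-dimensional tables. As a sketch, the 0/1 knapsack table needs only its previous row, and that row can even be updated in place, which brings the space down from O(n·W) to O(W) for n items and capacity W. The function name and the sample items are illustrative assumptions.

```python
def knapsack_max_value(items, capacity):
    """Best total value for 0/1 knapsack using a single 1-D array."""
    best = [0] * (capacity + 1)  # best[c] = best value achievable with capacity c
    for weight, value in items:
        # Go from high capacity to low so best[cap - weight] still refers to
        # the state before this item, which means each item is used at most once.
        for cap in range(capacity, weight - 1, -1):
            best[cap] = max(best[cap], value + best[cap - weight])
    return best[capacity]


print(knapsack_max_value([(1, 1), (2, 2), (3, 3)], 4))  # 4
```

Iterating capacities in increasing order instead would allow an item to be reused, which solves the unbounded knapsack variant rather than 0/1.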

Common Mistakes to Avoid

- Not identifying overlapping subproblems

- Writing recursion without memoization

- Using DP where it’s not needed

- Forgetting base cases

- Overcomplicating state definitions

Tips to Master Dynamic Programming

- Start with simple problems (Fibonacci, climbing stairs)

- Practice patterns (knapsack, sequences, partitions)

- Visualize with tables

- Convert recursion → memoization → tabulation

- Practice regularly

DP Patterns You Should Know

| Pattern Type | Example Problems |
| --- | --- |
| Linear DP | Fibonacci, Climbing Stairs |
| Grid DP | Unique Paths, Minimum Path Sum |
| Knapsack DP | 0/1 Knapsack, Subset Sum |
| Interval DP | Matrix Chain Multiplication |
| String DP | LCS, Edit Distance |
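Interval DP is often the least familiar of these patterns, so here is a minimal sketch for Matrix Chain Multiplication. The state is a range of matrices `i..j`, and the recurrence tries every split point inside that range. The function name and the sample dimensions are illustrative assumptions.

```python
def matrix_chain_cost(dims):
    """Minimum scalar multiplications to multiply a chain of matrices.

    Matrix i has shape dims[i] x dims[i + 1].
    """
    n = len(dims) - 1  # number of matrices in the chain
    if n <= 0:
        return 0
    # cost[i][j] = cheapest way to multiply matrices i..j (inclusive)
    cost = [[0] * n for _ in range(n)]
    for length in range(2, n + 1):        # solve short intervals first
        for i in range(n - length + 1):
            j = i + length - 1
            cost[i][j] = min(
                cost[i][k] + cost[k + 1][j] + dims[i] * dims[k + 1] * dims[j + 1]
                for k in range(i, j)      # split into (i..k) and (k+1..j)
            )
    return cost[0][n - 1]


# Three matrices: 10x30, 30x5, 5x60
print(matrix_chain_cost([10, 30, 5, 60]))  # 4500
```

Here multiplying the first two matrices first costs 1500 + 3000 = 4500, while the other order costs 27000.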

Dynamic Programming vs Greedy Algorithm

| Feature | Dynamic Programming | Greedy Algorithm |
| --- | --- | --- |
| Approach | Solves all subproblems | Chooses best at each step |
| Complexity | Usually higher | Usually lower |
| Accuracy | Optimal (given optimal substructure) | Not always optimal |
| Example | Knapsack (0/1) | Fractional Knapsack |
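A small sketch makes the accuracy difference visible. With the assumed coin set `[1, 3, 4]` and target 6, always taking the largest coin gives 4 + 1 + 1 (three coins), while DP finds 3 + 3 (two coins). Both function names and the coin set are chosen for illustration.

```python
def greedy_coin_count(coins, amount):
    """Repeatedly take the largest coin that fits; may be suboptimal."""
    count = 0
    for coin in sorted(coins, reverse=True):
        count += amount // coin
        amount %= coin
    return count if amount == 0 else None  # None: greedy could not make the amount


def dp_coin_count(coins, amount):
    """Consider every coin for every value up to amount; optimal."""
    INF = float("inf")
    dp = [0] + [INF] * amount  # dp[v] = fewest coins to make value v
    for value in range(1, amount + 1):
        for coin in coins:
            if coin <= value:
                dp[value] = min(dp[value], dp[value - coin] + 1)
    return dp[amount] if dp[amount] != INF else None


print(greedy_coin_count([1, 3, 4], 6))  # 3
print(dp_coin_count([1, 3, 4], 6))      # 2
```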

Intuition Behind DP

Think of DP like remembering past experiences.

Instead of solving the same problem repeatedly, you say:

“I’ve seen this before—I already know the answer.”

That’s the essence of Dynamic Programming.

Conclusion

Dynamic Programming may seem complex at first, but it becomes intuitive with practice. It’s all about:

- Breaking problems into smaller pieces

- Reusing solutions

- Thinking efficiently

Once you start recognizing DP patterns, you’ll notice that many difficult problems become manageable.

Learning Dynamic Programming is like unlocking a new level in problem-solving. At first it feels challenging, but once mastered, it gives you a powerful edge in coding, interviews, and real-world applications.